Architecting for Change Part IV: How Accidental Complexity Gets Into a System

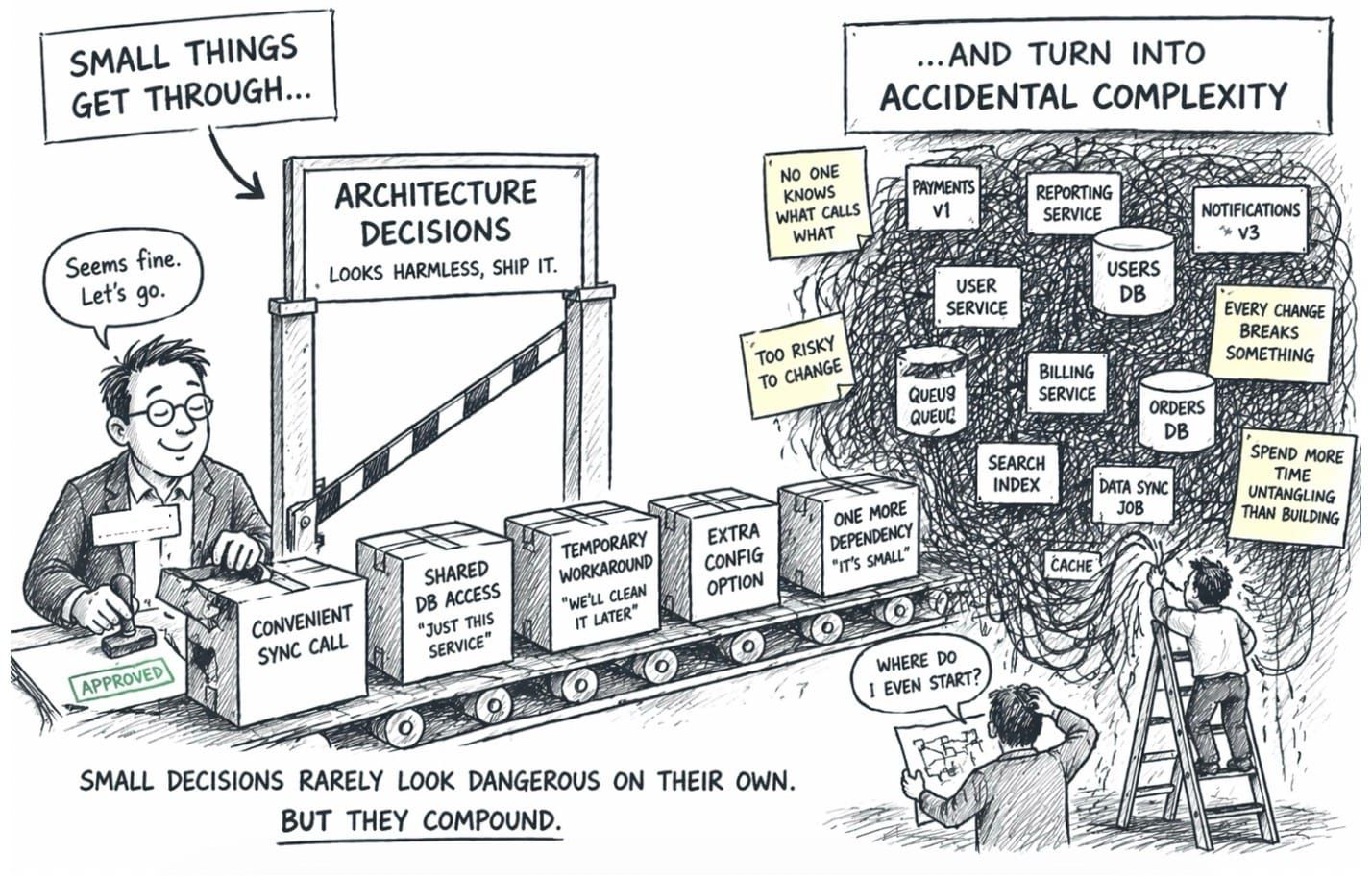

Accidental complexity rarely announces itself.

It usually arrives as drag. Changes that should be straightforward take longer than expected. Teams need more coordination to deliver less. Confidence in change slowly drops. Workarounds appear, dependencies multiply, and the system becomes harder to understand, evolve, and trust.

That is usually when people say the architecture has become “complex”. But by then, the problem has often been building for a while.

As I said in the previous post in this series, essential complexity is the complexity inherent in the domain. Accidental complexity is everything else. Some of it comes from the world we work in: tools, platforms, frameworks, and runtime environments. That kind can often be reduced, but not completely eliminated.

The more interesting kind is the complexity we introduce ourselves. It comes from poor boundaries, weak ownership, muddled decisions, unnecessary dependencies, excessive optionality, and process overhead masquerading as control. That is self-inflicted complexity, and once it gets into a system, it rarely stays contained. It spreads.

Adding complexity is easy. It enters through decisions that seem reasonable at the time. Removing it is much harder.

Martin Reeves puts it well:

“Creating and reducing complexity may sound like perfect opposites. But in fact fundamental asymmetries exist between the two.”

Accidental complexity enters locally, accumulates systemically, and is often reinforced organisationally.

It enters through locally sensible decisions

A lot of accidental complexity enters through decisions that look not just harmless, but sensible in isolation.

That is part of the problem. Each decision is easy to justify locally because the cost is small in the moment and the benefit is immediate. But the cost does not stay local. It accumulates across the system.

Sometimes the shortcut is a dependency added because it was faster than thinking through the boundary. Sometimes it is a shared data structure, a database table reached across a boundary, or an integration that works technically but weakens the model.

Sometimes it is a decision we refuse to make.

Keeping options open can be sensible when uncertainty is high and the number of viable options remains small. Beyond that, optionality starts to turn into muddle. We add configuration because we do not want to choose. We support too many variations because nobody wants to disappoint anyone. We call it flexibility, but often it is just complexity with a friendlier name.

I have seen systems built this way: huge codebases with almost endless configuration. In theory, they are flexible. In practice, they are confusing. Adopters with simple needs see them as overkill, while those with genuinely complex needs still find them time-consuming to configure, difficult to understand, and risky to change.

That is not flexibility. It is deferred decision-making turned into system design.
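One way to see why optionality turns into muddle is that independent options multiply, rather than add to, the number of configurations someone may have to understand, support, and test. The sketch below is a hypothetical illustration, assuming the options are fully independent (in real systems some combinations are invalid, so this is an upper bound). The option names and values are invented for the example and do not come from any real product.

```python
import math
from itertools import product
from typing import Dict, Iterator, Mapping, Sequence

# Hypothetical options for an imaginary product; names and values are invented.
OPTIONS: Dict[str, Sequence[object]] = {
    "pricing_mode": ["flat", "tiered", "custom"],
    "approval_flow": ["none", "single", "dual"],
    "rounding": ["up", "down", "half_even"],
    "legacy_export": [True, False],
}


def count_variants(options: Mapping[str, Sequence[object]]) -> int:
    """Independent options multiply: the total is the product of each option's choices."""
    return math.prod(len(values) for values in options.values())


def enumerate_variants(options: Mapping[str, Sequence[object]]) -> Iterator[Dict[str, object]]:
    """Yield every concrete configuration, i.e. every combination someone may need to reason about."""
    keys = list(options)
    for combo in product(*options.values()):
        yield dict(zip(keys, combo))


if __name__ == "__main__":
    print(count_variants(OPTIONS))  # 3 * 3 * 3 * 2 = 54
    # Adding one more on/off flag doubles the total rather than adding one.
    print(count_variants({**OPTIONS, "audit_mode": [True, False]}))  # 108
```

Four modest-looking options already describe 54 distinct systems; each further on/off flag doubles that. Few teams test or reason about every one of them, which is part of why such systems become risky to change.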

None of these decisions has to be catastrophic on its own. That is what makes them dangerous. They change the shape of the system gradually. Coupling increases, ownership blurs, and ordinary work starts to require more coordination than it should. Over time, teams stop just changing software and start negotiating their way through it.

The customer rarely sees the dependency graph, the unclear ownership model, or the overloaded team. They see slower change, more defects, less reliability, and a business that cannot respond as quickly as it should.

Poor boundaries let it spread

Poor boundaries are one of the easiest ways for accidental complexity to enter a system, and once it is in, they let it spread.

A boundary is not useful just because it exists technically. Splitting something into smaller parts does not make the system simpler. Sometimes it does the opposite. It creates more moving parts while leaving the real coupling untouched.

Good boundaries do not remove complexity. They put it where it belongs. They allow teams to own a coherent part of the domain, make decisions locally, and integrate with others through explicit relationships rather than accidental dependencies.

That is why domain boundaries matter. A bounded context is not just a technical partition. It is a boundary around a model, a language, a set of business rules, and the team that can evolve them with confidence.

When those boundaries are weak, the model leaks. Teams begin sharing data structures, databases, assumptions, knowledge, and implementation details. The system may be distributed physically, but it becomes tangled logically.

The result is familiar. A change in one place unexpectedly affects another. Teams need to coordinate on things that should have been local. Integration becomes less about clear contracts and more about excessive coordination and hope.

That is how you end up with systems that look decomposed on a diagram but still behave like a tightly coupled mess in practice.
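To make the contrast between accidental dependencies and explicit relationships concrete, here is a deliberately small, hypothetical Python sketch. The "Orders" and "Invoicing" contexts, the field names, and the status encoding are all invented for illustration, and an in-memory dict stands in for a database table. The first function reaches into another context's internal data; the second depends only on a small contract that the owning context publishes.

```python
from dataclasses import dataclass

# --- Inside a hypothetical "Orders" bounded context ---------------------------
_SHIPPED = 2  # internal encoding: 0 = draft, 1 = placed, 2 = shipped


@dataclass
class _OrderRow:
    """Internal persistence shape. Other contexts should not depend on this."""
    id: str
    status_code: int
    total_pence: int


# Stand-in for the Orders database table.
_ORDERS_TABLE = {"o-1": _OrderRow("o-1", _SHIPPED, 4599)}


@dataclass(frozen=True)
class OrderSummary:
    """Published contract: expressed in terms other contexts can rely on."""
    order_id: str
    shipped: bool
    total_pence: int


def get_order_summary(order_id: str) -> OrderSummary:
    """Published interface of Orders; its internals can change behind it."""
    row = _ORDERS_TABLE[order_id]
    return OrderSummary(order_id=row.id, shipped=row.status_code == _SHIPPED,
                        total_pence=row.total_pence)


# --- Inside a hypothetical "Invoicing" bounded context ------------------------
def invoicing_can_invoice_leaky(order_id: str) -> bool:
    # Reaches across the boundary into Orders' table and hard-codes its
    # internal status encoding: an accidental dependency.
    return _ORDERS_TABLE[order_id].status_code == 2


def invoicing_can_invoice(order_id: str) -> bool:
    # Depends only on the contract Orders chose to publish.
    return get_order_summary(order_id).shipped
```

Both functions give the same answer today. The difference appears when Orders changes how it stores status: only `get_order_summary` needs to change, while the leaky version either breaks or keeps running with the wrong meaning. In a real system the contract might be an API, an event, or a published schema rather than a function call.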

Cognitive load tells you when ownership is no longer real

Accidental complexity also grows when cognitive load rises too far.

Every service has an upper bound on what its team can comfortably own. That upper bound is not just technical. It is organisational too. It is the point at which the team can no longer hold enough of the service, its dependencies, its failure modes, and its change surface in its head to evolve it comfortably.

A team may technically own a service while no longer being able to change it with confidence. That is an important distinction.

Once that point is reached, the system may still function, but change becomes slower, riskier, and more tiring. People avoid the parts they do not fully understand. They copy what already exists because it feels safer than rethinking it. They add local fixes because unpicking the real problem feels too expensive.

The service becomes harder to change, not because the domain suddenly became more complex, but because the team can no longer navigate the shape of what has been built.

That is when accidental complexity starts to compound. Workarounds increase, comprehensibility suffers, duplication grows, and lead times get worse. Complexity is no longer a set of isolated design problems. It becomes the operating environment.

Conway’s Law decides where the pain shows up

When technical boundaries do not align with team boundaries, complexity gets pushed into coordination, hand-offs, ambiguity, and delay. This is not just poor ownership. It is Conway’s Law being ignored.

The organisation will shape the architecture whether we acknowledge it or not. You do not get to opt out of that. If communication patterns, ownership boundaries, and team structures cut across the design, the software will reflect it.

What looked like a clean technical boundary on a diagram becomes an awkward social boundary in practice.

That is why ignoring Conway’s Law is so costly. The problem is not simply that people need to talk more. It is that the architecture starts working against the way the organisation actually communicates and operates.

This shows up in obvious places, such as teams organised around functional silos: UI, backend, database, testing, deployment. Each group optimises for its own part of the process, and the real cost is paid in hand-offs, queues, rework, and unclear ownership.

It also shows up in less obvious ways. Organisational politics, personal friction, or local management incentives can lead to “Separate Ways” relationships, in which contexts deliberately do not integrate at all. That happens not because it is the best design choice, but because the people involved do not want to collaborate.

The architecture then records the social reality of the organisation, whether intentionally or not.

Each decision may make sense from where a team is standing. Together, they create more accidental complexity. The architecture stops being something the organisation can work with and becomes something it has to fight.

Short-term incentives make complexity rational

This is often worse in organisations driven by short-term delivery structures, whether that takes the form of feature-throughput pressure or project-based work.

The reason is simple. Teams are often incentivised to complete the work in front of them, not to live with the long-term consequences of the decisions made to deliver it. In project-based organisations, that problem is often sharper still because the team itself may be temporary. The project ends, people move on, and the system is left carrying the cost.

So the system gets shaped by short-term delivery pressure. Dependencies are introduced because they are expedient. Boundaries are crossed because it is quicker. Code is changed wherever it needs to be changed because nobody has clear enough stewardship to say no.

In theory, this looks like flexibility and speed. In practice, it often means distributed coupling, blurred ownership, and architectural drift.

Foundational capabilities are especially vulnerable to this. They are often treated like project deliverables, even though they need to evolve into supported, reliable, reusable capabilities. If they are important enough for many teams to depend on, they are important enough to have clear ownership, investment, and a direction of travel.

That is why short-term delivery models can be such effective generators of accidental complexity. They do not just neglect the shared and cross-cutting parts. They push short-term incentives into the design of the whole system.

Final thoughts

Accidental complexity is not just a technical design problem. It is a socio-technical one.

It grows when locally sensible decisions are allowed to accumulate without good boundaries, real ownership, manageable cognitive load, and long-term stewardship.

The environment matters because it decides which behaviours are easy to repeat and which ones require effort to resist. If the environment rewards shortcuts, hand-offs, local optimisation, and weak ownership, it will not just preserve accidental complexity. It will amplify it.

The natural response in many organisations is to add more control: more review boards, more approval steps, more meetings, and more gates. That response is understandable, but it often makes the problem worse.

Heavyweight governance can become another source of accidental complexity in its own right.

That is what I will look at next.