Microservices Did Not Fail. The Boundaries Did.

This post comes off the back of a week of seeing the same old “microservices are bad, monoliths are good” arguments. In some contexts, that reaction is understandable. If people have lived through a painful microservice architecture, it is not surprising that they want to pull things back.

But I think the argument often misses the point.

The problem is rarely just the technology. It is usually the interaction between boundaries, ownership, team structure, and the way change has to flow through the organisation.

I have been meaning to write about this for a while. Once I started, it was obvious this needed to be a series rather than one overloaded post.

The posts in this series are not just based on my own experience. They are also shaped by a few excellent talks and articles that I will link to at the end of the series.

The familiar conversation

There has been a familiar conversation doing the rounds again.

“We have gone too far down the microservices route.”

“It is not working.”

“We need to rein it back.”

“We should focus on modular monoliths instead.”

I have heard this in a few organisations now. Sometimes the wording changes, but the pattern is usually the same. Teams are dealing with too many services, too many dependencies, too much coordination, too many pipelines, too many dashboards, and too many failures that seem to move from one place to another. At some point, people stop saying “we have a design problem” and start saying “microservices have failed”.

But that is not the right diagnosis.

That does not mean microservices were the right choice everywhere. They often are not. Microservices have a premium. You pay for them in operational complexity, distributed failure modes, testing difficulty, debugging complexity, observability, deployment pipelines, versioning, data ownership, and team coordination.

If you do not need or cannot take advantage of the benefits, why pay the premium?

When microservices are not really microservices

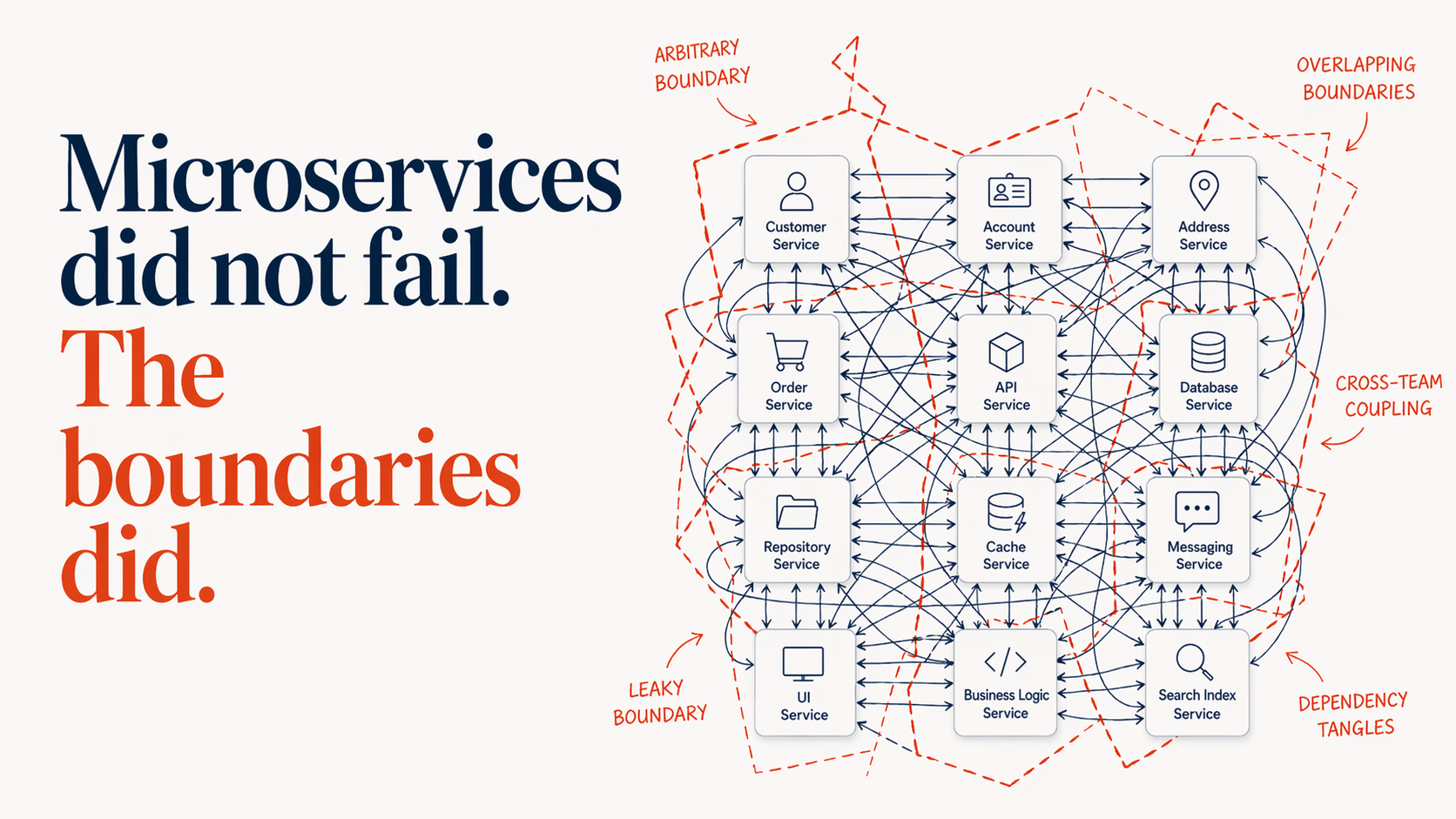

In many cases I have seen, the problem was not microservices as an architectural style. It was that what got built was not really microservices at all, but a set of distributed objects, CRUD endpoints, entity-based services, shared database wrappers, or tiny technical services that happened to be deployed separately.That is a very different thing.

A microservice is not just a small web API. It is not a container. It is not a table with an endpoint in front of it. It is not a function deployed to the cloud. It is definitely not a service smaller than X lines of code.

Some of those may be ways to partition the system, but they do not provide good boundaries on their own. The important question is not whether the system was partitioned. It is what criteria were used to partition it. If we split arbitrarily, we should not be surprised when the result is a distributed mess.

A useful microservice implements a meaningful boundary. One shaped around a business capability or a cohesive part of the domain: an area where the problem can be solved, changed, and owned with a reasonable degree of independence. It can be deployed independently without dragging half the estate with it. It has clear ownership of its model and the data it is responsible for. It can tolerate failure around it. It can be tested independently. It has enough operational maturity that teams can understand what it is doing in production.

If that is not true, the service may still be deployed separately, but the separation is mostly physical. Architecturally, the system is still tightly coupled. We have just moved the coupling onto the network, where it is slower, less reliable, harder to see, and more expensive to change.

Physical separation is not architectural separation

That is how microservices go wrong.

They go wrong when we split the system into separately deployed parts and assume the architecture has improved.

They go wrong when every business concept becomes a deployable unit, every entity gets an API, and every change requires a chain of synchronous calls across five teams.

They go wrong when we mistake entities for service boundaries. A subscription, customer, property, invoice, or order may be important to the business, but that does not automatically make it a good service boundary. If the same concept appears in several parts of the business with different meanings, behaviours, and rules, turning it into one central service may create exactly the coupling we were trying to avoid.

They go wrong when services are split around technical layers rather than business capabilities. One team owns the front end, another owns the API, another owns the database, another owns the integration, and then we wonder why a small product change needs a project plan.

They go wrong when services share databases because “it is quicker for now”, and then nobody can change the schema without a negotiation.

They go wrong when one service calls another as if it were an in-memory object, and then that service calls another, and then that one calls three more. The code looks simple locally, but the runtime behaviour is a distributed obstacle course.

They go wrong when the boundaries reflect team availability rather than domain boundaries. A new team is formed, so a new service is created. The service exists because the organisation needed somewhere to put the work, not because the domain had a natural seam.

They go wrong when nobody owns the service as a product or capability. A project creates it, hands it over, and moves on. Six months later, every team depends on it, nobody understands it, and changing it becomes an archaeological exercise.

And they go badly wrong when the organisation adopts the deployment cost of microservices without also adopting the engineering and operating practices that make them viable.

A microservice architecture without strong delivery automation, observability, contract discipline, resilience patterns, clear ownership, and sensible platform support is not modern architecture. It is distributed hope.

Why modular monoliths sound attractive

This is why the current reaction is understandable. When people experience all of that pain, “let’s go back to modular monoliths” sounds sensible. In some cases, it may be the right move. A modular monolith can be a very good architectural choice, especially when the domain boundaries are still moving, the team structure is not settled, or the cost of distribution is not justified.

But a modular monolith does not remove the need for good design.

It still needs boundaries, cohesion, clear ownership, a model that reflects the domain, and discipline around dependencies.

The main advantage of a modular monolith is not that boundaries matter less. It is that getting boundaries wrong is cheaper.

If two modules live within the same deployable system and the boundary turns out to be wrong, refactoring may still be painful, but it is usually cheaper and safer to correct. If those same modules have already been split into separate services with separate data stores, separate deployment pipelines, separate operational ownership, and live consumers, the same correction becomes much harder. You are no longer just moving code. You are moving behaviour, migrating data, preserving compatibility, coordinating releases, and avoiding breakage for downstream consumers.

That is why modular monoliths can be attractive. They do not make poor boundaries good, but they can make poor boundaries cheaper to correct. A big ball of mud is bad. A distributed big ball of mud is worse. But the fact remains: you still need good boundaries.

The deployment shape is not the real decision

That is why the answer is not simply “microservices” or “modular monoliths”. The real decision is where the boundaries are, what they are there to keep cohesive, and what trade-offs we are willing to accept. That, in turn, helps us decide which boundaries really need to be deployed separately.

The first question is not whether something can be split out. It is whether it should be. Does it represent a meaningful boundary? Can the problem on either side be solved with a reasonable degree of independence? Can a team own it end-to-end without dragging half the organisation into every change?

Those are not the only things that shape a good boundary. Domain cohesion, cognitive load, communication structure, and coordination cost matter too. I will cover those in the next posts. The point here is simpler: find the right boundaries first. Only then should you decide whether any of them need separate deployment.

But that does not come for free, so there should be a clear reason for paying that cost. It usually makes sense when different parts of the system have significantly different:

- scalability and throughput needs

- availability and operational characteristics

- fault-tolerance requirements

- rates of change

- security and compliance constraints

Those are not reasons to prefer “microservices”. They are reasons a boundary may need separate deployment.

We are not supposed to be building “microservices” or “monoliths” as if those are the outcome that matters. We are supposed to solve business and design problems well, and choose a deployment shape that fits.

Sometimes the right answer is to keep a well-defined boundary inside one deployable. Sometimes the right answer is to deploy that boundary separately. Sometimes the right answer changes over time.

But if the real problem is unclear ownership, weak design, poor team alignment, slow delivery pipelines, and high accidental complexity, those things need attention first. Otherwise, “monolith” or “microservices” just becomes a choice between a big ball of mud and a distributed big ball of mud.

Final thoughts

The real question is not whether microservices or modular monoliths are better. The real question is whether our boundaries are shaped around the problems that matter, and whether the way we organise teams, ownership, and delivery supports those boundaries or quietly works against them.

If the boundaries are poor, both styles will eventually become painful. One will just make the pain distributed.

In the next post, I’ll look at how we find better boundaries: not just in code, but across the domain, team structure, ownership, and the wider organisational forces that shape how change happens.